I participated in Singapore's biggest student hackathon

This past weekend, I along with my friends Manas, Sparsh and Shrivardhan participated in Hack & Roll 2024 at the National University of Singapore.

Over 24 hours, we built LookOut. LookOut is a Multimodal AI enabled IoT device that allows a visually impaired person to interact with their environment. This blog will be a part tutorial, part journey diary and part personal reflection of my experience.

Let's delve in.

Hardware is really hard.

Our plan was simple,

Get the hardware (that we received from NUSHacks) up and running

Set up a microcontroller

SSH into it

Connect our camera, microphone and speaker sensor.

Write a script that interacts with the SOTA models through their respective API endpoints

Add a button to detect user input

and voila!

Well if it was that simple, our 24 hours would've been about 4 and this blog would be really boring.

Turns out hardware is really hard. Murphy must have been using an ESP32 camera module while he came up with his law

Everything that can go wrong will go wrong

because it did.

For starters, our Raspberry Pi 4 didn't work. Unfortunately we operated under the impression that it could work for about 12 hours. Shrivardhan and Sparsh used all their hardware skills to debug a Pi with faulty RAM while Manas and I researched and wrote a Python script to benchmark the response time for our solution on our laptops.

Quick note on our survey of Multimodal AI:

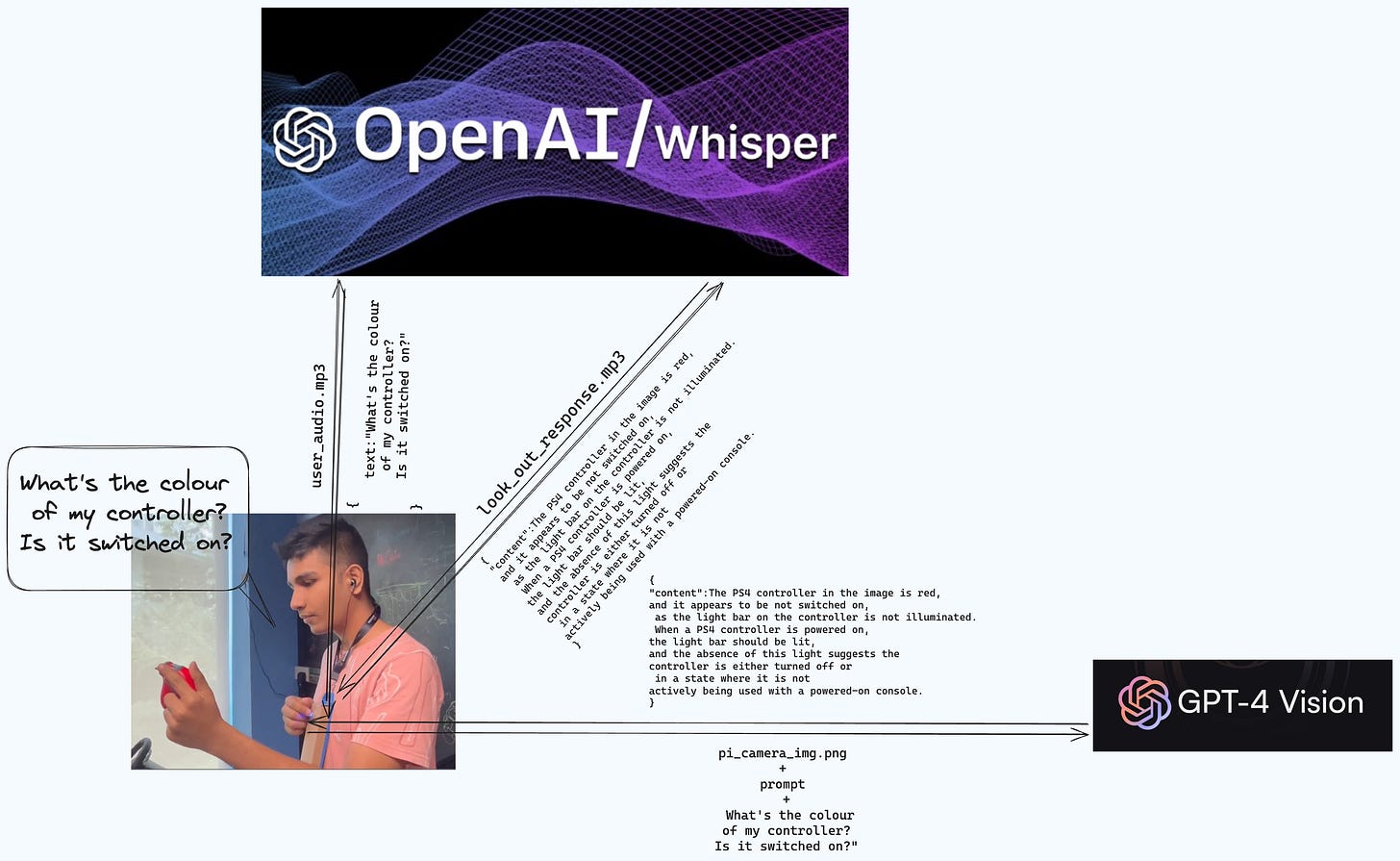

Text to speech and speech to text appears to be a solved problem. While there are solutions like DeepGram that can do this near instantly, using OpenAI's whisper also worked just as well. Even without any streaming, we were consistently getting 5-10 seconds of audio converted to text in 3s, and vice versa in 1.5s.

The main time constraint came from the text to image model. We did try Llava (https://llava.hliu.cc), Google's Gemini, Alibaba's Qwen-VL apart from OpenAI's GPT 4 Vision. While most of the models worked just about fine for any "Describe the scene" category of prompts, the speed of GPT 4 Vision as well as it's ability to handle specific prompts made us pick it.

We did come to the conclusion that our solution could be much faster and better if we didn't solely rely on these LLMs (more on that later)

Concurrency is magical

Couple of hours in and our hardware counterparts were racking their brains, switching in and out different Pi's, flashing Raspbian OS multiple times on what seemed like a never ending supply of SD cards, with Sparsh even running all the way from Clementi to get parts.

Meanwhile, we agree to move forward with this broad architecture.

In short,

User (speech) --Whisper-STT--> Text -> Picture clicked(Image) --GPT-4-Vision--> Answer(text) --Whisper--TTS--> Answer(played in User's earphones) (yay!)

And by evening we had a working prototype on our laptops. The only problem was that stringing these APIs sequentially gave us a response time of about 30-40s. Empathising for a moment, if a visually impaired person were to use this product, that is an awful lot of time spent waiting for a response; especially if you might have to retake a picture at a better angle.

This had to be solved, and Shrivardhan and Manas had a great idea. Concurrency! For speech to text and text to speech, we batch processed each sentence and run two threads one for calling the Whisper API and the other for playing/recording audio. This cut our processing time in half. We even clicked a picture while the user was talking, which takes a surprising amount of time on a Raspberry Pi (using the libcamera-still command).

A tryst with ESP32

It's 10:00 PM, we have dinner (which was great, thanks sponsorship money!) and recuperate.

Our current situation is:

- The Raspberry PI 4 is dead (RIP)

- Google's AI Voice kit, (which contained our speaker, microphone and their drivers) has no support for it's Android app, the included Pi Zero doesn't load up and its modified Raspbian doesn't allow anybody to SSH into it.

- Sparsh mentioned using his Node MCU as a replacement and we found a ESP32S3-CAM camera module with microphone lying around.

We were almost halfway through and didn't have any hardware that worked, which was slightly problematic since our entire demo was based on a hardware device. So we took a call and decided, two of us would work on this alternative solution and the other two would focus on getting our own Raspberry PI 3 up and running.

And thus began the journey where Manas and my JavaScript and Python experience was severely challenged by ESP32 modules and Arduino C++. This is where I actually really appreciated my Digital Logic and Computer Architecture course, which along with Sparsh's debugging skills was the only thing that gave me an understanding of what was going on.

4 hours in, and so far we've only gotten the camera to turn on, speaker to connect and play Rick Astley's Never gonna give you up in monophonic form.

Meanwhile, Shrivardhan barges into the Maker's studio where our setup is and yells, "I got the RPi to work!"; and so, our tryst with the ESP32 modules comes to an end.

Closing thoughts

To cut the next 6 hours short, we worked through the night, getting the camera and speaker(now earphones) to work. Eventually we used Sparsh's RODE USB microphone, which was inconspicuously attached to our demo table as our microphone input.

And while we had our hardware scares, when audio failed at 9AM, the mobile hotspot that connected our RPi and laptop through SSH failed 10 minutes prior to the demo being a few.

It worked.

And that's what it all came to. We had 24 hours and an idea; we spent all of our collective efforts into making this one idea work and it did.

And that's all that mattered.

P.S. It’s all open-sourced on Manas’ GitHub, and here’s a demo video of it working